Carsten Nicolai is a man of many artistic guises, effortlessly leaping from art installations at the Venice Biennale to Barbican concerts and club-ready techno sets at Tokyo’s Contact, whilst also running the visionary imprint NOTON.

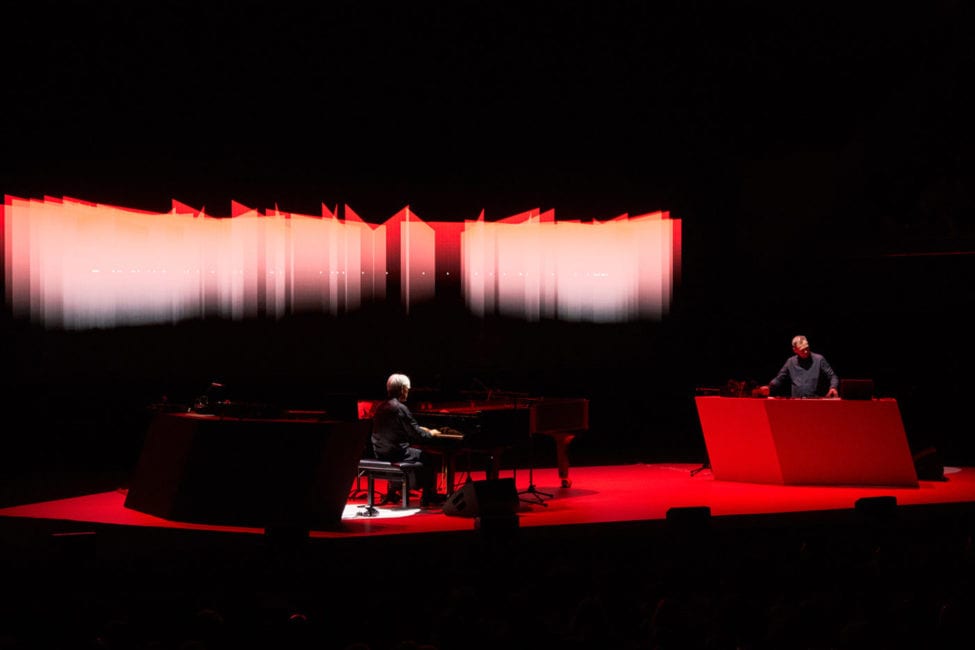

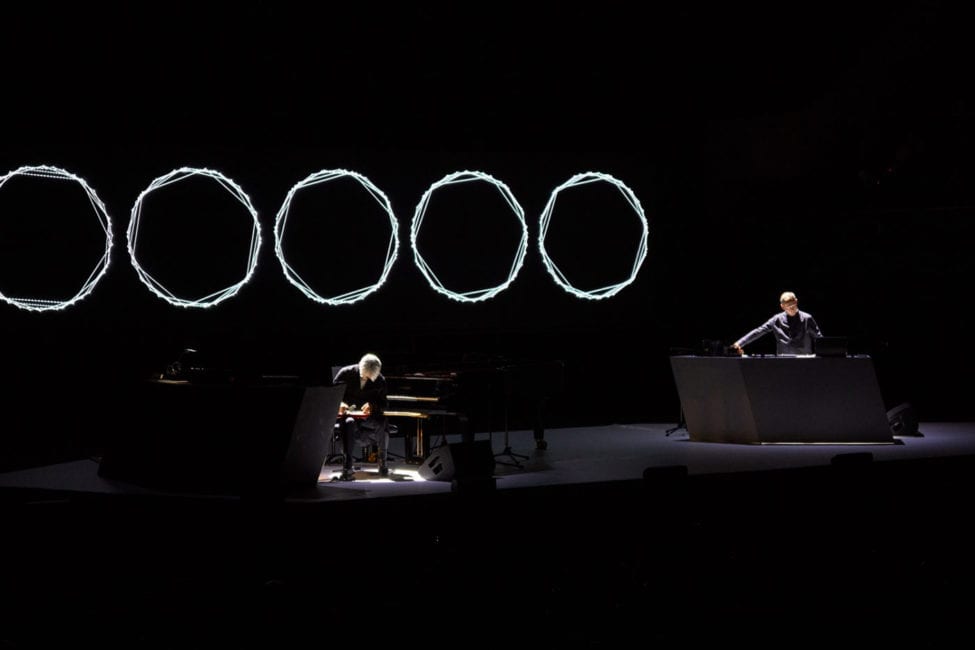

2019 has been a busy year under his musical guise Alva Noto, with a new improvisational album, ‘TWO’, recorded live with Ryuichi Sakamoto at the Sydney Opera House – Sakamoto playing piano, Nicolai providing electronics. This was followed by a collaborative work with sound poet Anne-James Chaton as Alphabet, exploring the intersection of language and sound.

We caught up with the Berlin-based artist prior to Alphabet’s London performance at St Luke’s Church, Old Street, to find out more about these releases, photoelectronic synths, whether language or music came first and where exactly all of this started.

Interview by Andy Gillham

"More and more we create space where we don't control each other... we just let it go and wait until something appears and that actually makes us both more relaxed."

Your work inhabits lots of different worlds – music, maths, science, visuals, cymatics, sound design. Was there a pivotal moment in your life that led you to this intersection or did you start in one of those areas and then organically grow into the others?

I think there was a moment around the early ’90s when the Wall came down (as I lived in East Germany) and for the first time I had the possibility to travel. I started getting interested in electronic music and in the same period some friends and I kind of squatted an old brewery – a really, really large complex of buildings, something like six or seven buildings.

First we took just one building. I was running a gallery with friends – a non-profit, artist-run arts space. Other friends of mine had been running an independent cinema and we united to form… an arts centre is not the right description… as it was more of a private place. So we created this wonderful space that had exhibitions, a cinema, a bar, a club and at the same time within the complex we had our studio.

It was a very crucial moment for me as I was much more interested purely in visual art… I was always interested in theatre, cinema and music and here we combined everything by inviting club artists from the techno world and more avant-garde artists all in the same house. Through this I felt that the musical aspect was kind of missing in my life – I was always a listener rather than a producer.

This was the period that I founded NOTON and started making music, electronic music on the more avant-garde edge, meeting artists who became my friends later like Ryoji Ikeda, Panasonic, Tommi from Sähkö, Touch from London. It was a really beautiful scene and community. It was a small, condensed scene but the people who worked in this field of music were really supportive of each other.

How do you find the scene now given so much has changed since that period? There’s a lot more accessibility to equipment and music in general – how has this affected you as an artist or from a label perspective?

I call it the “liberation of production tools”. Many artists were concerned it would have a bad impact, but I think it’s had a very good impact as it’s given me the possibility to start producing music. I compare it with the punk movement, where people went to a concert, saw the energy and thought ‘I want to do this’… ‘I can do this too’.

You activate a lot of people to go from being listeners to being producers. If you activate people to be more active this is always a good thing. It’s a great liberation, particularly in electronic music as the tools are very easy to access.

When it comes to your sound palette…the types of sounds you use are very pure such as high and low frequency sine waves. When it comes to the tools and equipment you use, do you use hardware or are you software based?

In the beginning, digital tools were not that great so you started with whatever equipment was available. There was a moment when all the universities in East Germany got all new equipment because of the political change, so they threw out all their old equipment.

A classmate of mine had become a teacher in the Department of Physics at the Technical University so I gave him a call and asked him if he had any equipment that produces sounds in the range of hearing. He said come over as we’re just throwing everything out. So I went to these huge garbage bins and filled my car full of equipment – from oscilloscopes to oscillators.

When you receive these types of oscillators they are not limited in terms of frequency – it’s not like a synthesizer. You have an oscillator that goes from 0.1 Hz to maybe 40 or 50 kHz… much higher than the audible range. This equipment was not necessarily produced for making sound… in classical synths these super high and low frequencies are not necessary.

Starting from this point I was influenced a lot by local artists who had been experimenting with sound on a totally different level. It was more based on language. There was one specific guy who was really interested in how sound becomes language…tracing language back to the most primitive and basic vocalisations. It was very experimental and profound research on what is actually language and sound.

In a way I took this approach around what is a frequency, what is a sound and how we perceive them. I was super interested in high and low frequencies. I didn’t believe that our hearing ends at 20,000 Hz. If we don’t hear it, maybe we feel it, so I was experimenting in this kind of field. I was not really interested in music but much more interested in finding what is sound, and what makes sound, sound.

"There was a big shift when Ryuichi said he didn't want to play existing pieces anymore, he'd love to do newer pieces. It's much more interesting for us to create new things every time"

I was reading something recently in David Byrne’s book ‘How Music Works’ about an experiment where audio was recorded of speakers of various languages and just the vowel sounds that are common amongst humanity were extracted… leaving kind of tones which also sat within the twelve notes of the chromatic scale. I guess they were trying to work out if language became music…

Or the opposite! I mean there is always an intersection. Sound and language are very interwoven. Language has much more complexity as it has a much stronger social aspect – we are simply communicating with language. But in one way or another we are communicating with music too… on a totally different level, not on an intellectual level.

A kind of emotional level?

It works without our understanding. This is one of the most beautiful and striking things…of course there is always this big need of ours that we want to understand why, but actually I think it’s not possible to understand 100%.

Your work with Ryuichi Sakamoto feels like a very symbiotic relationship – a kind of exercise in restraint from two different approaches to music. How hard or easy was it for you to meet in the middle? Did it just kind of happen or was it through a number of stages?

It was through a number of stages and how we met for the first time. Everything for me was defined by frequency and time. Rhythm was loop lengths, I didn’t care about divisions, or a clock or bars… bars didn’t exist for me… it was just a grid system.

I was not into scales, just frequency. For me the basic frequency was 50 Hz because it’s the frequency at which electricity oscillates… so for me the most basic tone is 50 Hz – I always started with 50 Hz in a way.

I realised when I started working with Ryuichi that my scale was not working with his scale because he worked with a classical notation system. The closest to an ‘A’ is 55 Hz… so 5 Hz more than my scale! I needed to re-tune everything! The piano can be tuned of course but there are some instruments you cannot tune, like bells, because they are set. If you work with any of these instruments, they give the tuning.

I have to say Ryuichi understood what I was doing so he helped me, saying ‘listen, you are out of tune’. He would say it’s ‘A’ and I was like, what does it mean, ‘A’?! So I basically had lists of numbers – A is 55 or 110 – and I had to re-tune everything from the start.

In the very beginning when I started working with Ryuichi’s sounds from the piano I had not analysed it so much, I did it all by hearing. When Ryuichi says a bar is 5/4… what does this mean to me… it doesn’t say anything to me… so this was maybe the beginning. But in the same way Ryuichi moved away from classical, he became much more interested in improvisation and detuning. So in a way we kind of moved together step-by-step… it took me a while to understand.

In your live setup when you play with Ryuichi, I’ve read you use Max for Live software tools for real-time analysis, enabling you to make on-the-fly choices. How much is your live set based around this kind of experimentation?

In the beginning there were a few things like this, for the first albums. Then of course there is the problem that in the studio you have a lot of time and work offline. For live performances you have to develop something that allows you to do the same thing that you’ve been doing in the studio, but in the moment. That’s the reason we built these instruments.

Nowadays I don’t use much of this Max/MSP stuff. We work with more standalone instruments. Having our own equipment with all its possibilities… then just playing it and improvising. This is a new thing for ‘TWO’ – we want this level of improvisation.

And how do you synchronise? Is it via a click track or all done by ear… because everything is amazingly in time.

Nothing! Some pieces need to be in time… we have a clear strategy and we watch each other… we communicate with our eyes. Some pieces are written down so we know where the changes are, but these are pieces that existed that we’ve played for a long time like ‘Berlin’ or ‘Trioon’ so we stick to the original.

More and more we create space where we don’t control each other…we just let it go and wait until something appears and that actually makes us both more relaxed. It’s more surprising and makes it more fun but it is risky.

The first time we played like this we tried to create a similar theme to the records but we are no longer interested in this idea. We recorded these five albums that became… as we call them, the Virus series… the first letters of the title of each album (Vrioon, Insen, Revep, utp_, Summvs) spell this word – Virus.

After we finished this Ryuichi had his cancer treatment and we stopped any recordings and playing together and we didn’t know if he would ever start again. There was a big shift when Ryuichi said he didn’t want to play existing pieces anymore, he’d love to do newer pieces. It’s much more interesting for us to create new things every time.

So we did this performance in The Glass House with contact mics, utilising the architecture and it was really exciting for us and we thought this was a possible way for us to continue working. We are trying to make half of any performance an improvisation.

"Ryuichi moved away from classical, he became much more interested in improvisation and detuning. So in a way we kind of moved together step-by-step....it took me a while to understand"

What musical instrument have you always wanted if money were no object?

There are two instruments that I love from the past. One is the Theremin – I still think the Theremin has so much opportunity to develop. As pure as it is…it’s very beautiful but very difficult to play…you need to have a lot of skills and knowledge that not many people have. I love this instrument.

There is another instrument, Russian-built… the ANS synthesizer, developed in the ’50s/’60s, based on picture analysis… before computers. If I had the time I would love to recreate that instrument because only one exists in a museum in Moscow and it doesn’t work any more. All the tubes and wiring are not great. I played it once and I think it’s fantastic to play with.

What can we expect from your performance with Anne-James Chaton?

There was a long-held wish to make a record with Anne-James because I’ve known him for so long now and since the first time I met him I have been fascinated with his stuff – there were always these collaborations on my solo albums with him… one or two tracks.

We decided to make a full record together, from scratch. In a way it’s a continuation of the previous tracks – joining my sound with his vocals. It’s a kind of conceptual electronic record in a way but the setting looks like a classic synth band from the ’80s… one guy on the computer, one silent guy… a bit like the Pet Shop Boys!

I like this constellation from the ’80s as I grew up during that period… I think it still works. He’s a performer with his voice… you could say singer even though he’s more reading rather than singing… his voice has a great musical aspect. The text is called Alphabet. It runs from A to Z with 14 different pieces and sections with different topics.

With the album we have included a book of the texts as they are quite abstract…but at the same time it’s a collection of how language develops, like we spoke about before. It’s a conceptual record but still works as music I think – it has both qualities.

And what are your plans for next year?

Right now I’m starting to record ‘Xerrox Volume 4’ – I’ve already been working on it for a couple of months… the obsession with copying and more melodic, ambient, non-rhythmic stuff. I would love to finish that by the end of the year and then maybe put it out by the end of March 2020.

They seem to be getting more melodic these Xerrox releases?

Yes, at the beginning they were more experimental and noisy. Volume 3 was much more melodic in a way and less experimental. I became very interested in more of a cinematic experience of the Xerrox pieces so I’ve been creating something that would work like a soundtrack and I think this is the reason as well that I was involved in ‘The Revenant’ soundtrack. We are planning a really interesting show for Xerrox in London in 2020, I’m not sure if I can mention it but…

‘TWO – Live at The Sydney Opera House’ is out now via Noton. ‘Alphabet’ is out now, also via Noton.

TRACKLIST

1. Inosc

2. Propho

3. Trioon II (Live)

4. Scape I

5. Berlin (Live)

6. Scape II

7. Morning (Live)

8. Iano (Live)

9. Emspac

10. Kizuna (Live)

11. Gitrac

12. Monomom

13. Panois

14. Naono (Live)

15. The Revenant Theme (Live)

Discover more about Alva Noto and NOTON on Inverted Audio.